MySQL高可用集群之MySQL-MMM

MySQL-MMM原理

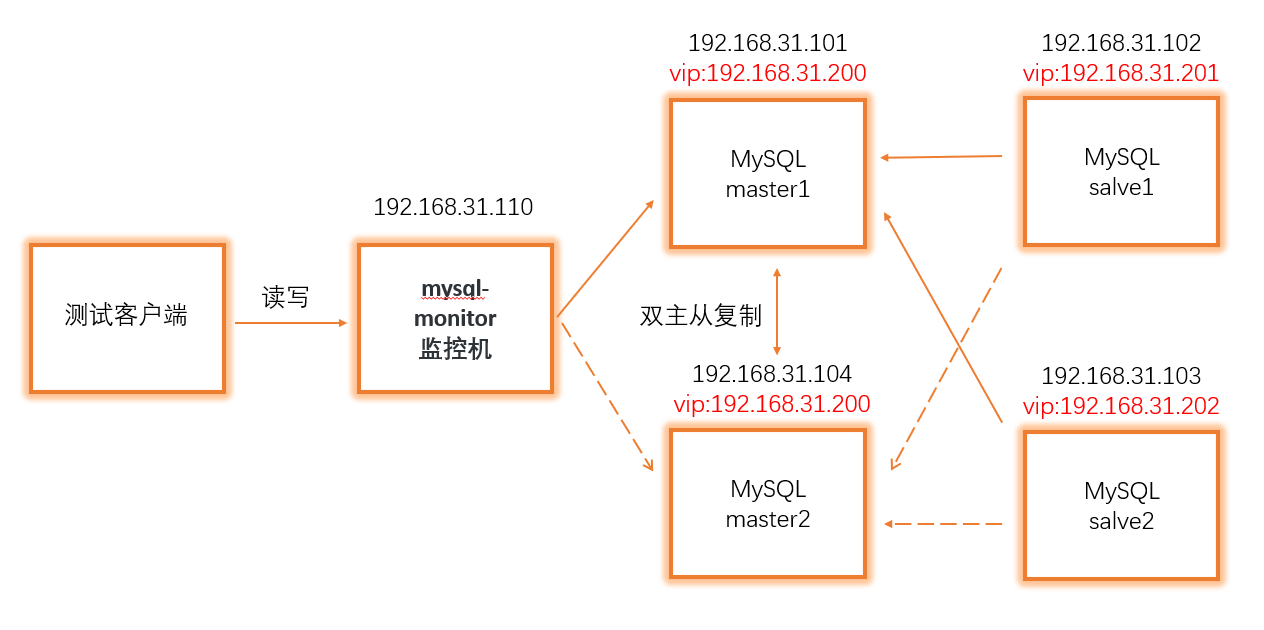

就是两台Master服务器,两台Master服务器实现双主从同步,当一个出现故障,另一个便会顶上去作为正在使用的服务器,需要一个监控机来控制vip虚拟ip的偏移该软件的三个工具

mmm_monitor:监控进程,负责所有的监控工作

mmm_agent:运行在每个MySQL服务器上的代理进程

mmm_control:提供管理mmm_mond进程的命令脚本

MySQL-MMM配置搭建

★准备工作★

一共需要5台服务器

监控机:192.168.31.110

master1:192.168.31.101

master2:192.168.31.104

slave1:192.168.31.102

slave2:192.168.31.103

配置环境如下图所示:

全部服务器安装epel源:

yum install -y epel-release

yum clean all && yum makecache

配置master双主从复制

1.配置master1和master2配置文件

master1(192.168.31.101):

vim /etc/my.conf

---------------------------

server-id = 1

log-bin = master-bin

log-slave-updates = true

relay-log = relay-log-bin

relay-log-index = slave-relay-bin.index

master2(192.168.31.104):

vim /etc/my.conf

---------------------------

server-id = 11

log-bin = master-bin

log-slave-updates = true

relay-log = relay-log-bin

relay-log-index = slave-relay-bin.index

重启master1和master2的mysql服务

systemctl restart mysqld

2.查看两台服务器master_log_file和master_log_pos的值并实现双主从同步

master1(192.168.31.101):

mysql> show master status;

+-----------------+----------+--------------+------------------+-----------------+

|File | Position | Binlog_Do_DB | Binlog_Ignore_DB |Executed_Gtid_Set|

+-----------------+----------+--------------+------------------+-----------------+

|master-bin.000004| 154 | | | |

+-----------------+----------+--------------+------------------+-----------------+

1 row in set (0.00 sec)

master2(192.168.31.104):

mysql> show master status;

+-----------------+----------+--------------+------------------+-----------------+

|File | Position | Binlog_Do_DB | Binlog_Ignore_DB |Executed_Gtid_Set|

+-----------------+----------+--------------+------------------+-----------------+

|master-bin.000001| 154 | | | |

+-----------------+----------+--------------+------------------+-----------------+

1 row in set (0.00 sec)

master1(192.168.31.101):

注意:这里master_log_file和master_log_pos的值取master2的

mysql> grant replication slave on *.* to 'myslave'@'192.168.31.%' identified by '123456';

Query OK, 0 rows affected, 1 warning (0.01 sec)

mysql> change master to master_host='192.168.31.104',master_user='myslave',master_password='123456',master_log_file='master-bin.000001',masterr_log_pos=154;

Query OK, 0 rows affected, 2 warnings (0.01 sec)

重启mysql服务之后查询

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.31.101

Master_User: myslave

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: master-bin.000001

Read_Master_Log_Pos: 1196

Relay_Log_File: relay-log-bin.000004

Relay_Log_Pos: 666

Relay_Master_Log_File: master-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

master2(192.168.31.104):

注意:这里master_log_file和master_log_pos的值取master1的

mysql> grant replication slave on *.* to 'myslave'@'192.168.31.%' identified by '123456';

Query OK, 0 rows affected, 1 warning (0.01 sec)

mysql> change master to master_host='192.168.31.101',master_user='myslave',master_password='123456',master_log_file='master-bin.000004',masterr_log_pos=154;

Query OK, 0 rows affected, 2 warnings (0.01 sec)

重启mysql服务之后查询

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.31.101

Master_User: myslave

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: master-bin.000004

Read_Master_Log_Pos: 1196

Relay_Log_File: relay-log-bin.000005

Relay_Log_Pos: 666

Relay_Master_Log_File: master-bin.000004

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

配置slave从节点

1.配置文件

vim /etc/my.conf

---------------------------

server-id = 2

relay-log = relay-log-bin

relay-log-index = slave-relay-bin.index

2.主从复制master1

注意:这里master_log_file和master_log_pos的值取master1的

change master to master_host='192.168.31.101',master_user='myslave',master_password='123456',master_log_file='master-bin.000004',master_log_r_log_pos=154;

重启mysql服务查询

slave1(192.168.31.102):

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.31.101

Master_User: myslave

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: master-bin.000004

Read_Master_Log_Pos: 154

Relay_Log_File: relay-log-bin.00005

Relay_Log_Pos: 321

Relay_Master_Log_File: master-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

slave2(192.168.31.103):

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.31.101

Master_User: myslave

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: master-bin.000004

Read_Master_Log_Pos: 154

Relay_Log_File: relay-log-bin.00005

Relay_Log_Pos: 1411

Relay_Master_Log_File: master-bin.000004

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

安装MySQL-MMM

1.所有的服务器安装MySQL-MMM(一共5台服务器都需要安装)

yum -y install mysql-mmm*

2.所有4台mysql服务器创建授权MMM的账号

#授权monitor用户

grant replication client on *.* to 'mmm_monitor'@'192.168.31.%' identified by 'mmm123456';

#授权agent用户

grant super,replication client,process on *.* to 'mmm_agent'@'192.168.31.%' identified by 'agent123456';

3.所有的服务器配置mmm_common.conf文件(一共5台都需要配置)

vim /etc/mysql-mmm/mmm_common.conf

-----------------------------------------

active_master_role writer

<host default>

cluster_interface eth0

pid_path /run/mysql-mmm-agent.pid

bin_path /usr/libexec/mysql-mmm/

replication_user replicant

replication_password slave

agent_user mmm_agent

agent_password agent123456

</host>

<host db1>

ip 192.168.31.101

mode master

peer db2

</host>

<host db2>

ip 192.168.31.104

mode master

peer db1

</host>

<host db3>

ip 192.168.31.102

mode slave

</host>

<host db4>

ip 192.168.31.103

mode slave

</host>

<role writer>

hosts db1, db2

ips 192.168.100.200

mode exclusive

</role>

<role reader>

hosts db3, db4

ips 192.168.100.201, 192.168.100.202

mode balanced

</role>

4.所有4台mysql服务器上配置mmm_agent.conf文件

vim /etc/mysql-mmm/mmm_agent.conf

----------------------------------------

include mmm_common.conf

# The 'this' variable refers to this server. Proper operation requires

# that 'this' server (db1 by default), as well as all other servers, have the

# proper IP addresses set in mmm_common.conf.

this db1

this db1这里根据mmm_common.conf文件每台ip地址对应的名字改写

5.在监控服务器192.168.31.110上配置mmm_mon.conf文件

vim /etc/mysql-mmm/mmm_mon.conf

---------------------------------------

include mmm_common.conf

<monitor>

ip 127.0.0.1

pid_path /run/mysql-mmm-monitor.pid

bin_path /usr/libexec/mysql-mmm

status_path /var/lib/mysql-mmm/mmm_mond.status

ping_ips 192.168.31.101,192.31.104,192.31.102,192.31.103

auto_set_online 60

# The kill_host_bin does not exist by default, though the monitor will

# throw a warning about it missing. See the section 5.10 "Kill Host

# Functionality" in the PDF documentation.

#

# kill_host_bin /usr/libexec/mysql-mmm/monitor/kill_host

#

</monitor>

<host default>

monitor_user mmm_monitor

monitor_password mmm123456

</host>

debug 0

ping_ips填写上四台mysql服务器的地址

6.启动代理和监控服务

#监控服务器

systemctl start mysql-mmm-monitor

#4台mysql服务器

systemctl start mysql-mmm-agent

测试结果

查看群集情况:mmm_control show

在监控服务器(192.168.31.110)上查看集群情况

[root@test ~]# mmm_control show

db1(192.168.31.101) master/ONLINE. Roles: writer(192.168.100.200)

db2(192.168.31.104) master/ONLINE. Roles:

db3(192.168.31.102) slave/ONLINE. Roles: reader(192.168.100.201)

db4(192.168.31.103) slave/ONLINE. Roles: reader(192.168.100.202)

停掉192.168.31.101这台mysql数据库服务器再查看发现db1处于HARD_OFFLINE(离线状态,如下所示),master2(192.168.31.104)变成写数据的服务器

[root@test ~]# mmm_control show

db1(192.168.31.101) master/ONLINE. Roles: writer(192.168.100.200)

db2(192.168.31.104) master/REPLICATION_FAIL. Roles:

db3(192.168.31.102) slave/ONLINE. Roles: reader(192.168.100.201)

db4(192.168.31.103) slave/ONLINE. Roles: reader(192.168.100.202)

[root@test ~]# mmm_control show

db1(192.168.31.101) master/HARD_OFFLINE. Roles:

db2(192.168.31.104) master/ONLINE. Roles: writer(192.168.100.200)

db3(192.168.31.102) slave/ONLINE. Roles: reader(192.168.100.201)

db4(192.168.31.103) slave/ONLINE. Roles: reader(192.168.100.202)

再启动192.168.31.101这台mysql数据库服务器查看发现挂掉的服务器恢复在线状态(如下所示)

[root@test ~]# mmm_control show

db1(192.168.31.101) master/AWAITING_RECOVERY. Roles:

db2(192.168.31.104) master/ONLINE. Roles: writer(192.168.100.200)

db3(192.168.31.102) slave/ONLINE. Roles: reader(192.168.100.201)

db4(192.168.31.103) slave/ONLINE. Roles: reader(192.168.100.202)

[root@test ~]# mmm_control show

db1(192.168.31.101) master/ONLINE. Roles:

db2(192.168.31.104) master/ONLINE. Roles: writer(192.168.100.200)

db3(192.168.31.102) slave/ONLINE. Roles: reader(192.168.100.201)

db4(192.168.31.103) slave/ONLINE. Roles: reader(192.168.100.202)