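Building a Kubernetes v1.23.6 Cluster

Preface: in Kubernetes v1.23.6 the container runtime is still Docker. The whole procedure is carried out on Linux using the kubeadm tool.

kubeadm setup (v1.23.6, one master, multiple workers)

☆Preparation☆

Configure the hosts file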

vim /etc/hosts

------------------------

192.168.31.101 master

192.168.31.102 node1

192.168.31.103 node2
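
Editing the file by hand works for three machines; when the same entries have to be applied on every node, an idempotent helper can be convenient. The following is a minimal sketch, not part of the original procedure: the function name `add_host_entry` and the `HOSTS_FILE` variable are made up for illustration, and the file defaults to a scratch file so the snippet is safe to try.

```shell
# Sketch: append a host entry only if the hostname is not already present.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts (as root)
# on a real node.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host_entry() {
    ip="$1"
    name="$2"
    # Look for the hostname as a whole field on any non-comment line.
    if ! awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$HOSTS_FILE"; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

add_host_entry 192.168.31.101 master
add_host_entry 192.168.31.102 node1
add_host_entry 192.168.31.103 node2
```

Running the three calls a second time leaves the file unchanged, so the snippet can be re-run on a node that is already configured.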

Disable swap

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
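
The sed expression above prefixes every line containing the word swap with `#`, including lines that are already commented, so running it twice produces `##`. A slightly more defensive variant is sketched below. It is only an illustration: the function name `disable_swap_entries` and the sample fstab entries are made up, and the file defaults to a scratch copy rather than the real /etc/fstab.

```shell
# Sketch: comment out active swap entries without double-commenting.
# FSTAB defaults to a scratch file filled with made-up sample entries.
FSTAB="${FSTAB:-$(mktemp)}"
if [ ! -s "$FSTAB" ]; then
    cat > "$FSTAB" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
#/dev/sdb1 swap swap defaults 0 0
EOF
fi

disable_swap_entries() {
    # Skip lines that already start with '#'; comment out lines that have
    # "swap" as a whitespace-delimited field. A backup is kept as FILE.bak.
    sed -i.bak -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

disable_swap_entries "$FSTAB"
cat "$FSTAB"
```

On a real node the function would be called with /etc/fstab as root, after `swapoff -a`.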

CentOS

Load the IPVS kernel modules, install ipset and ipvsadm, and set the kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

# br_netfilter provides the net.bridge.* sysctl keys set below
modprobe -- br_netfilter

yum install -y ipset ipvsadm

cat > /etc/sysctl.d/k8s.conf << EOF

net.ipv4.ip_forward = 1

net.ipv4.ip_nonlocal_bind = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

EOF

# IPv6 is disabled above. If you need IPv6, also add the following parameter

#net.bridge.bridge-nf-call-ip6tables = 1

# Apply the settings

sysctl --system
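
Modules loaded with modprobe do not survive a reboot. On systemd-based distributions, files under /etc/modules-load.d/ are read at boot, which is the mechanism the Ubuntu section of this guide uses. The sketch below writes the module list in that format. The function name and the target file name `/etc/modules-load.d/ipvs.conf` are assumptions for illustration, and `br_netfilter` is included on the assumption that the net.bridge.* keys are needed. On kernels 4.19 and later, `nf_conntrack_ipv4` has been merged into `nf_conntrack`, so the name would need adjusting there.

```shell
# Sketch: write the module list to a modules-load.d style file.
# MODULES_FILE defaults to a scratch file; /etc/modules-load.d/ipvs.conf is a
# hypothetical name for use on a real node (requires root).
MODULES_FILE="${MODULES_FILE:-$(mktemp)}"

write_ipvs_modules() {
    # One module name per line.
    printf '%s\n' ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4 br_netfilter > "$1"
}

write_ipvs_modules "$MODULES_FILE"
cat "$MODULES_FILE"
```

After a reboot, `lsmod | grep ip_vs` can be used to confirm the modules were loaded.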

Ubuntu

On all nodes, load the following kernel modules and set the following kernel parameters for Kubernetes

modprobe overlay

modprobe br_netfilter

cat > /etc/modules-load.d/containerd.conf <<EOF

overlay

br_netfilter

EOF

cat > /etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

# Reload to apply the changes above

sysctl --system
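
Before moving on, it can help to confirm that the configuration file really sets every required key. The following sketch checks a sysctl-style file for keys set to 1. The function name `missing_sysctl_keys` is made up for illustration, and the file defaults to a scratch copy with the same three keys as above.

```shell
# Sketch: report required keys that are absent or not set to 1.
# SYSCTL_CONF defaults to a scratch file mirroring the settings above.
SYSCTL_CONF="${SYSCTL_CONF:-$(mktemp)}"
if [ ! -s "$SYSCTL_CONF" ]; then
    cat > "$SYSCTL_CONF" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
fi

missing_sysctl_keys() {
    # $1 is the config file; the remaining arguments are the required keys.
    conf="$1"
    shift
    for key in "$@"; do
        awk -F= -v k="$key" '
            { gsub(/[[:space:]]/, "", $1); gsub(/[[:space:]]/, "", $2) }
            $1 == k && $2 == "1" { ok = 1 }
            END { exit !ok }' "$conf" || echo "$key"
    done
}

# Prints nothing when every key is present and set to 1.
missing_sysctl_keys "$SYSCTL_CONF" \
    net.bridge.bridge-nf-call-iptables \
    net.bridge.bridge-nf-call-ip6tables \
    net.ipv4.ip_forward
```

This only inspects the file. The live kernel value of a key can be read with `sysctl -n`, for example `sysctl -n net.ipv4.ip_forward`.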

Installation on CentOS

Installation steps

1. Install Docker (master node + worker nodes)

# Configure the Docker yum repository

yum install -y yum-utils

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install and start Docker

yum -y install docker-ce-20.10.9

systemctl start docker && systemctl enable docker

Configure the Docker daemon

cat > /etc/docker/daemon.json << EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2",

"storage-opts": [

"overlay2.override_kernel_check=true"

],

"registry-mirrors": ["https://tdhp06eh.mirror.aliyuncs.com"]

}

EOF

# Restart Docker to apply the configuration

systemctl restart docker

2. Configure the Aliyun Kubernetes repository (master node + worker nodes)

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

3. Install kubelet, kubeadm, and kubectl (master node + worker nodes)

Note: installing the kubectl command-line tool on worker nodes is optional.

yum install -y kubelet-1.23.6 kubeadm-1.23.6 kubectl-1.23.6

4. Initialize the master node (master node only)

List the required images

[root@master ~]# kubeadm config images list

I0319 04:39:14.261956 14892 version.go:255] remote version is much newer: v1.26.3; falling back to: stable-1.23

k8s.gcr.io/kube-apiserver:v1.23.17

k8s.gcr.io/kube-controller-manager:v1.23.17

k8s.gcr.io/kube-scheduler:v1.23.17

k8s.gcr.io/kube-proxy:v1.23.17

k8s.gcr.io/pause:3.6

k8s.gcr.io/etcd:3.5.1-0

k8s.gcr.io/coredns/coredns:v1.8.6

Write and run a script that pulls the required images first

vim k8s-images.sh

-----------------------------

#!/bin/bash

images=(

kube-apiserver:v1.23.6

kube-controller-manager:v1.23.6

kube-scheduler:v1.23.6

kube-proxy:v1.23.6

pause:3.6

etcd:3.5.1-0

coredns:v1.8.6

)

for imagename in "${images[@]}" ; do

docker pull "registry.aliyuncs.com/google_containers/$imagename"

done

# Make the script executable and run it to pull the images

chmod +x k8s-images.sh

./k8s-images.sh
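
The image list in the script is tied to one version. As a rough sketch, the list can be generated from a registry prefix and a version instead. The function name `k8s_images` is made up for illustration, and the pause, etcd, and coredns tags are simply the ones from the script above, which apply to v1.23.x; other releases use different tags.

```shell
# Sketch: print the full image references for a given registry and version.
# The pause/etcd/coredns tags are copied from the v1.23.x list above.
k8s_images() {
    repo="$1"
    version="$2"
    for component in kube-apiserver kube-controller-manager kube-scheduler kube-proxy; do
        echo "$repo/$component:$version"
    done
    echo "$repo/pause:3.6"
    echo "$repo/etcd:3.5.1-0"
    echo "$repo/coredns:v1.8.6"
}

k8s_images registry.aliyuncs.com/google_containers v1.23.6
```

The output can be piped to `xargs -n1 docker pull`. kubeadm can also pull the images itself with `kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.23.6`, which avoids maintaining the tag list by hand.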

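Run the initialization to deploy the master node components

Note: if the images are not present locally, they are pulled automatically during initialization.

Cluster initialization command: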

kubeadm init \

--apiserver-advertise-address=192.168.31.101 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.23.6 \

--service-cidr=10.96.0.0/12 \

--pod-network-cidr=10.244.0.0/16 \

--ignore-preflight-errors=all

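--apiserver-advertise-address: the internal IP address of the master node

--image-repository: the image registry to pull from

--kubernetes-version: the Kubernetes version

--service-cidr and --pod-network-cidr: any two ranges that do not overlap with each other or with the node network; the pod CIDR must also match the Network value in kube-flannel.yml (10.244.0.0/16 here)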
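
Whether two ranges overlap can be checked with a little integer arithmetic: two CIDR blocks overlap exactly when their addresses agree on the shorter of the two prefixes. The sketch below illustrates this for IPv4. The function names `ip_to_int` and `cidr_overlap` are made up for this example, and the code assumes well-formed input and 64-bit shell arithmetic.

```shell
# Sketch: test whether two IPv4 CIDR ranges overlap.
ip_to_int() {
    # Split the dotted quad into octets and combine them into one integer.
    o1=${1%%.*}; rest=${1#*.}
    o2=${rest%%.*}; rest=${rest#*.}
    o3=${rest%%.*}; o4=${rest#*.}
    echo $(( (o1 << 24) + (o2 << 16) + (o3 << 8) + o4 ))
}

cidr_overlap() {
    # Returns 0 (true) if the ranges overlap, 1 otherwise.
    a_ip=${1%/*}; a_len=${1#*/}
    b_ip=${2%/*}; b_len=${2#*/}
    # Compare both addresses under the shorter prefix.
    len=$a_len
    if [ "$b_len" -lt "$len" ]; then len=$b_len; fi
    mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$a_ip") & mask )) -eq $(( $(ip_to_int "$b_ip") & mask )) ]
}

if cidr_overlap 10.96.0.0/12 10.244.0.0/16; then
    echo "service and pod CIDRs overlap"
else
    echo "service and pod CIDRs are disjoint"
fi
```

For the values used in this guide, 10.96.0.0/12 covers 10.96.0.0 through 10.111.255.255, so it does not contain 10.244.0.0/16 and the check reports the ranges as disjoint.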

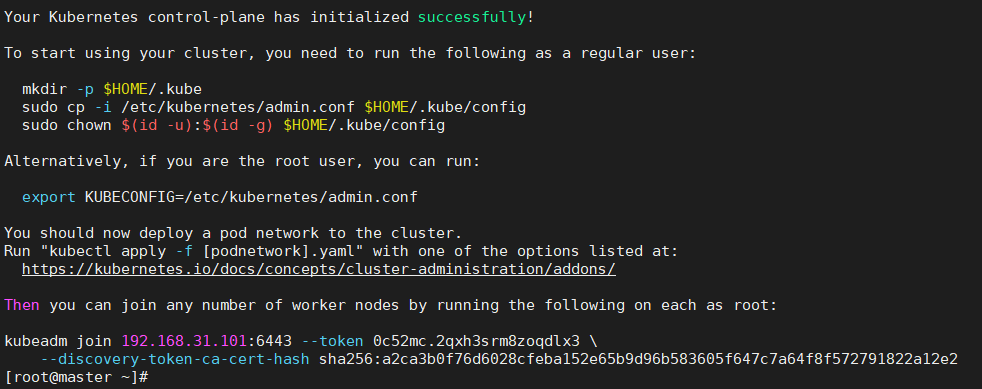

After a successful initialization, the output looks like the following:

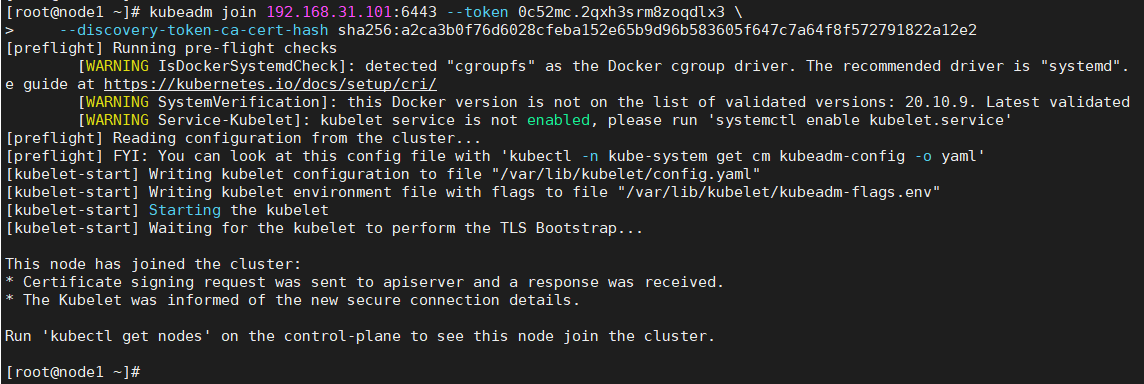

Run the following command on the other nodes to join them to the cluster

kubeadm join 192.168.31.101:6443 --token 0c52mc.2qxh3srm8zoqdlx3 \

--discovery-token-ca-cert-hash sha256:a2ca3b0f76d6028cfeba152e65b9d96b583605f647c7a64f8f572791822a12e2

5. Configure the kubectl client

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

6. Install the network plugin

Install the flannel network plugin

Contents of kube-flannel.yml:

apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  seLinux:
    rule: 'RunAsAny'

---

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch

---

kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system

---

apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system

---

kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: rancher/mirrored-flannelcni-flannel:v0.18.1
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: rancher/mirrored-flannelcni-flannel:v0.18.1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

# Finally, apply the manifest to deploy the plugin

kubectl apply -f kube-flannel.yml

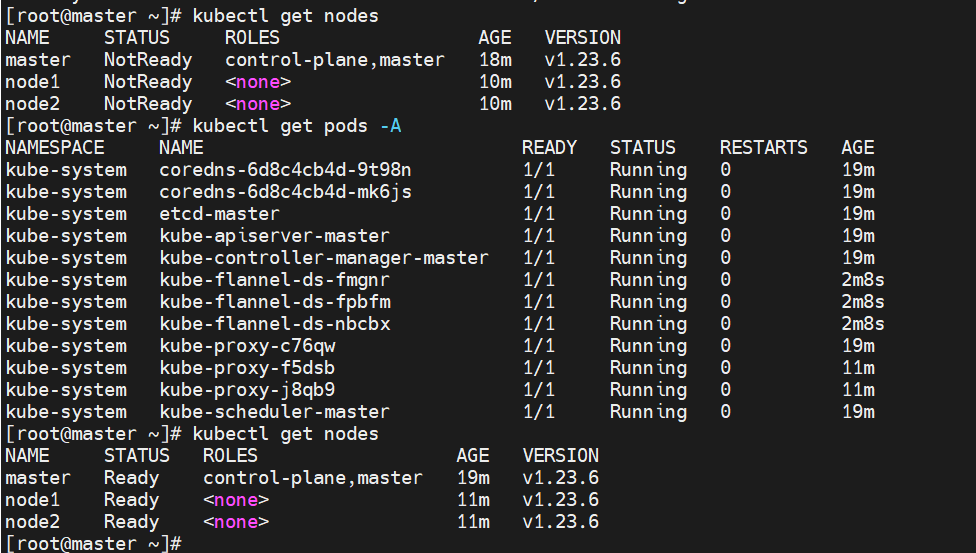

7. Check the cluster status

After the CNI network plugin is deployed, the node status changes from NotReady to Ready (see the figure).
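
The node status can also be checked from a script rather than by eye. The sketch below filters the text printed by `kubectl get nodes --no-headers`, whose second column is the node status. The function name `not_ready_nodes` and the sample listing are made up for illustration.

```shell
# Sketch: list nodes whose STATUS column is not exactly "Ready".
# Input is the text printed by `kubectl get nodes --no-headers`.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Made-up sample output for illustration. On a real cluster use:
#   kubectl get nodes --no-headers | not_ready_nodes
sample='master   Ready      control-plane,master   10m   v1.23.6
node1    Ready      <none>                 8m    v1.23.6
node2    NotReady   <none>                 1m    v1.23.6'

printf '%s\n' "$sample" | not_ready_nodes
```

With the sample above this prints `node2`. Note that a cordoned node reports `Ready,SchedulingDisabled` and would also be listed by this simple comparison.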

Installation on Ubuntu

Installation steps

1. Install Docker

apt -y install ca-certificates curl gnupg lsb-release

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" > /etc/apt/sources.list.d/docker.list

apt update

apt -y install docker-ce docker-ce-cli containerd.io

systemctl start docker && systemctl enable docker

Configure the Docker daemon

cat > /etc/docker/daemon.json << EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://tdhp06eh.mirror.aliyuncs.com"]

}

EOF

# Restart Docker to apply the configuration

systemctl restart docker

2. Configure the apt repository

apt install -y software-properties-common apt-transport-https

curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main"

3. Install kubectl, kubeadm, and kubelet

# Look up the version you want to install

root@master:~# apt-cache madison kubelet kubectl kubeadm |grep '1.23.6'

kubelet | 1.23.6-00 | https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial/main amd64 Packages

kubectl | 1.23.6-00 | https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial/main amd64 Packages

kubeadm | 1.23.6-00 | https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial/main amd64 Packages

# Install kubectl, kubeadm, and kubelet

apt install -y kubelet=1.23.6-00 kubectl=1.23.6-00 kubeadm=1.23.6-00

# Hold the packages so they are not upgraded automatically

apt-mark hold kubelet kubeadm kubectl

Note: the remaining steps are the same as on CentOS above. Continue from step 4 of the CentOS procedure.

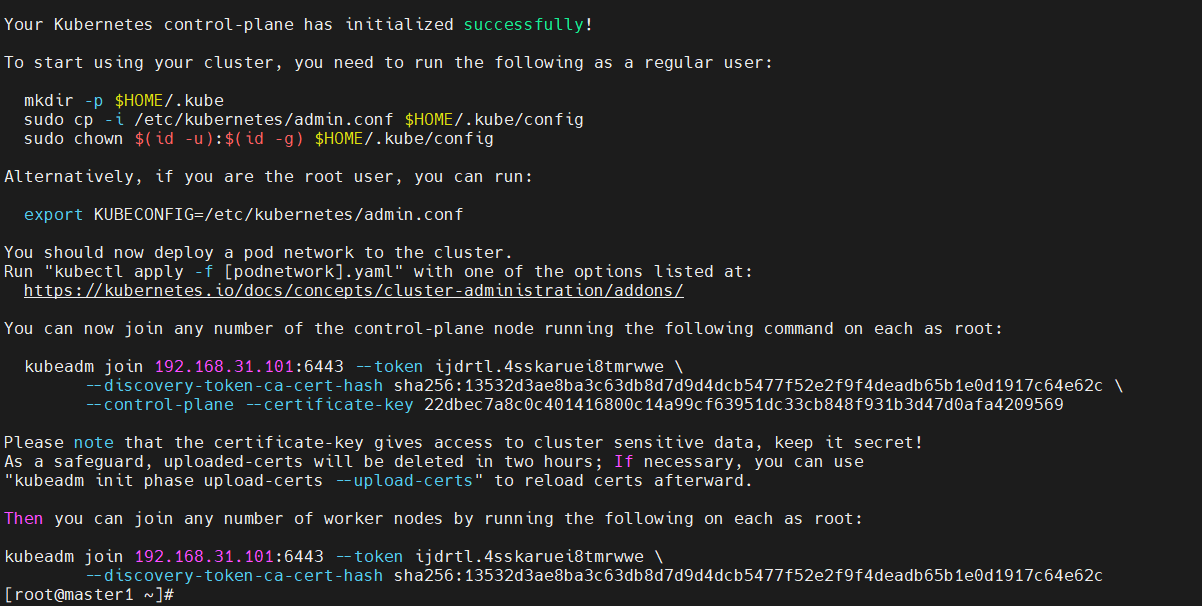

kubeadm setup (multiple masters, multiple workers)

kubeadm init \

--control-plane-endpoint=192.168.31.101:6443 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.23.6 \

--service-cidr=10.96.0.0/12 \

--pod-network-cidr=10.244.0.0/16 \

--upload-certs \

--ignore-preflight-errors=all

# To add a master node, run the following command

kubeadm join 192.168.31.101:6443 --token ijdrtl.4sskaruei8tmrwwe \

--discovery-token-ca-cert-hash sha256:13532d3ae8ba3c63db8d7d9d4dcb5477f52e2f9f4deadb65b1e0d1917c64e62c \

--control-plane --certificate-key 22dbec7a8c0c401416800c14a99cf63951dc33cb848f931b3d47d0afa4209569

# To add a worker node, run the following command

kubeadm join 192.168.31.101:6443 --token ijdrtl.4sskaruei8tmrwwe \

--discovery-token-ca-cert-hash sha256:13532d3ae8ba3c63db8d7d9d4dcb5477f52e2f9f4deadb65b1e0d1917c64e62c

Node management with kubeadm

Adding a worker node

On the master node, look up the cluster token and the CA certificate hash

[root@master ~]# kubeadm token list

TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS

crx4ot.pkb82hnkdv6t247c 23h 2023-03-20T09:02:37Z authentication,signing The default bootstrap token generated by 'kubeadm init'. system:bootstrappers:kubeadm:default-node-token

[root@master ~]# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

4181d832f9c7c1b383ae63d09afbcdb105e0b2fad0999e906d97b6c9755af4db

Then run the following command on the node you want to add

kubeadm join 192.168.31.101:6443 --token crx4ot.pkb82hnkdv6t247c \

--discovery-token-ca-cert-hash sha256:4181d832f9c7c1b383ae63d09afbcdb105e0b2fad0999e906d97b6c9755af4db

--token: followed by the token value found above

--discovery-token-ca-cert-hash: followed by sha256: and the certificate hash string found above
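
Copying the token and hash by hand is error-prone, so a small sanity check before assembling the command can save a failed join. The sketch below relies on two facts: bootstrap tokens have the form of 6 lowercase alphanumeric characters, a dot, then 16 more, and the hash is a SHA-256 digest of 64 hexadecimal characters. The function name `build_join_command` is made up for illustration.

```shell
# Sketch: validate a token and CA hash, then print the join command.
build_join_command() {
    endpoint="$1"
    token="$2"
    hash="$3"
    # Bootstrap token format: [a-z0-9]{6}.[a-z0-9]{16}
    echo "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$' \
        || { echo "invalid token: $token" >&2; return 1; }
    # SHA-256 digest: 64 lowercase hexadecimal characters.
    echo "$hash" | grep -Eq '^[0-9a-f]{64}$' \
        || { echo "invalid CA cert hash: $hash" >&2; return 1; }
    echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$hash"
}

build_join_command 192.168.31.101:6443 crx4ot.pkb82hnkdv6t247c \
    4181d832f9c7c1b383ae63d09afbcdb105e0b2fad0999e906d97b6c9755af4db
```

The function only checks the shape of the values. Whether the token is still valid is decided by the API server when the join is attempted.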

Note: a token is valid for 24h by default. If the token has expired, the following command generates a new join command for worker nodes

[root@master ~]# kubeadm token create --print-join-command

kubeadm join 192.168.31.101:6443 --token n4cob1.k5y9pzmau8g4aoll --discovery-token-ca-cert-hash sha256:4181d832f9c7c1b383ae63d09afbcdb105e0b2fad0999e906d97b6c9755af4db

Generate a token that never expires

Just add the --ttl 0 flag

[root@master ~]# kubeadm token create --ttl 0 --print-join-command

kubeadm join 192.168.31.101:6443 --token aji1kz.m8hlr7iwcir48zpw --discovery-token-ca-cert-hash sha256:4181d832f9c7c1b383ae63d09afbcdb105e0b2fad0999e906d97b6c9755af4db

[root@master ~]# kubeadm token list

TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS

aji1kz.m8hlr7iwcir48zpw <forever> <never> authentication,signing <none> system:bootstrappers:kubeadm:default-node-token

crx4ot.pkb82hnkdv6t247c 22h 2023-03-20T09:02:37Z authentication,signing The default bootstrap token generated by 'kubeadm init'. system:bootstrappers:kubeadm:default-node-token
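
A token that never expires stays usable by anyone who obtains it, so it is worth knowing which ones exist. The sketch below picks them out of the `kubeadm token list` output by looking for `<forever>` in the TTL column. The function name `forever_tokens` is made up, and the sample is an abbreviated copy of the listing above.

```shell
# Sketch: print tokens whose TTL column is "<forever>".
# Input is the text printed by `kubeadm token list` (header line included).
forever_tokens() {
    awk 'NR > 1 && $2 == "<forever>" { print $1 }'
}

# Abbreviated copy of the listing above. On a real cluster use:
#   kubeadm token list | forever_tokens
sample='TOKEN                     TTL         EXPIRES                USAGES
aji1kz.m8hlr7iwcir48zpw   <forever>   <never>                authentication,signing
crx4ot.pkb82hnkdv6t247c   22h         2023-03-20T09:02:37Z   authentication,signing'

printf '%s\n' "$sample" | forever_tokens
```

A token that is no longer needed can be removed with `kubeadm token delete` followed by the token value.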

Adding a master node

Generate the kubeadm-certs secret used when adding a new master node

[root@master1 ~]# kubeadm init phase upload-certs --upload-certs

I0319 09:22:18.658416 8022 version.go:255] remote version is much newer: v1.26.3; falling back to: stable-1.23

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

7c9ca6ad71fb09ff64c52eb2eafe8f3a475ab07282d0d100cf1ac255ee0075de

On the master node, look up the cluster token and the CA certificate hash

[root@master1 ~]# kubeadm token list

TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS

ijdrtl.4sskaruei8tmrwwe 23h 2023-03-20T13:06:51Z authentication,signing The default bootstrap token generated by 'kubeadm init'. system:bootstrappers:kubeadm:default-node-token

tcfm0o.g5lsr82h9gkb8msn 1h 2023-03-19T15:22:20Z <none> Proxy for managing TTL for the kubeadm-certs secret <none>

[root@master1 ~]# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

13532d3ae8ba3c63db8d7d9d4dcb5477f52e2f9f4deadb65b1e0d1917c64e62c

Run the following command on the new master node

kubeadm join 192.168.31.101:6443 --token ijdrtl.4sskaruei8tmrwwe \

--discovery-token-ca-cert-hash sha256:13532d3ae8ba3c63db8d7d9d4dcb5477f52e2f9f4deadb65b1e0d1917c64e62c \

--control-plane --certificate-key 7c9ca6ad71fb09ff64c52eb2eafe8f3a475ab07282d0d100cf1ac255ee0075de

--control-plane --certificate-key: followed by the certificate key newly generated for kubeadm-certs

Common setup errors

Error 1: [kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp [::1]:10248: connect: connection refused.

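Solution: this error usually means kubelet failed to start because its cgroup driver does not match Docker's. kubeadm configures kubelet to use systemd by default, while Docker typically defaults to cgroupfs, so set Docker's cgroup driver to systemd: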

cat > /etc/docker/daemon.json << EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2",

"storage-opts": [

"overlay2.override_kernel_check=true"

],

"registry-mirrors": ["https://tdhp06eh.mirror.aliyuncs.com"]

}

EOF

# Restart Docker to apply the configuration

systemctl restart docker
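
To confirm that the configuration file really requests the systemd driver, the value can be extracted from daemon.json with a short script. This is only a sketch: the function name `configured_cgroup_driver` is made up, and the file defaults to a scratch copy with a trimmed-down sample configuration.

```shell
# Sketch: extract the cgroup driver configured in a Docker daemon.json file.
# DAEMON_JSON defaults to a scratch file with a trimmed-down sample config.
DAEMON_JSON="${DAEMON_JSON:-$(mktemp)}"
if [ ! -s "$DAEMON_JSON" ]; then
    cat > "$DAEMON_JSON" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "storage-driver": "overlay2"
}
EOF
fi

configured_cgroup_driver() {
    # Prints the driver name; prints nothing if the option is not set.
    sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$1"
}

configured_cgroup_driver "$DAEMON_JSON"
```

With the sample above this prints `systemd`. On a live node, `docker info --format '{{.CgroupDriver}}'` reports the driver Docker is actually using after the restart, which is the value that has to match kubelet's.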